The Threat of Disinformation Looms Over EU Parliament Elections

Disinformation has proven to be a cost-effective election weapon that is difficult to defend against. It will likely get worse before it gets better, because all manner of legitimate content (such as biased reporting and opinion pieces) is being intermixed with actual disinformation: verifiably false or misleading information used to harm or deceive the public. The European Parliament elections begin May 23rd, and for months member countries have been preparing to fight online manipulation of the elections.

Sometimes having too much warning can be counterproductive. For instance, a hurricane’s path can be predicted fairly accurately in the days before it makes landfall. That time allows emergency management personnel to prepare and activate contingency plans. It also gives the public time to experience a wide range of reactions: anxiety, appreciation, readiness, doubt, anger, and relief. Ultimately, some people don’t evacuate because they believe that “it won’t be so bad” or simply never hear the warnings. Similarly, EU citizens have been hearing for months that disinformation campaigns are expected and that they should be vigilant against them. EU Member States are determined to make sure that disinformation isn’t used as a weapon to suppress turnout and erode confidence in the 2019 European Parliament elections.

In addition to the European Parliament elections, over 20 EU Member States have national parliamentary or presidential elections in 2019. The internet is involved in at least some portion of the electoral process in those countries, from registration to ballot casting to counting to disseminating results. Malicious actors are also using the internet as a very effective conduit to deliver targeted messages to both broad and niche groups. Those messages pose a dual threat: they can influence voters’ choices at the ballot box, and they can undermine voter confidence in the democratic electoral process itself. The challenge for officials is to debunk disinformation messages quickly and confidently while they are going viral across social networks, and at the same time to avoid restricting content that is not disinformation.

Let’s examine a particular case of disinformation to show just how difficult that task can be. We will explore what makes each element of the disinformation message authentic and believable, and what kind of expertise is required to examine it critically. How hard can it be to spot this kind of message in the wild?

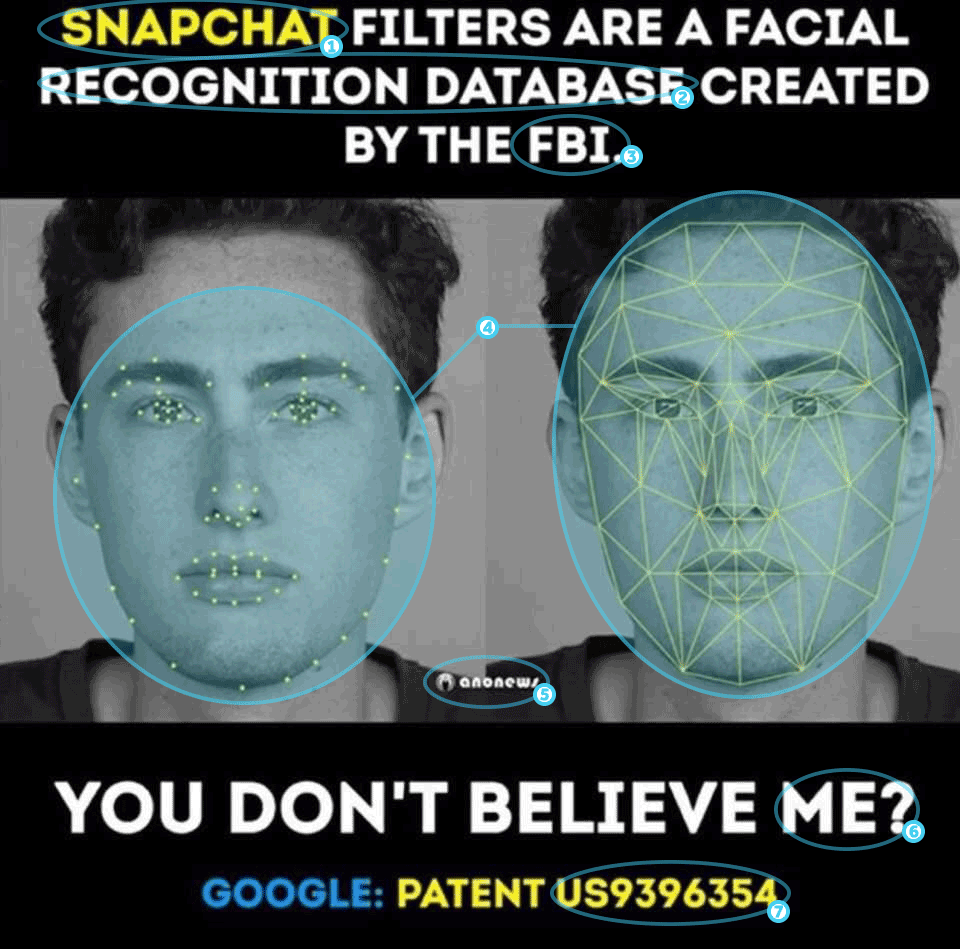

The example here is a disinformation message that was debunked by the popular fact-checking website Snopes. It shows how disinformation messages can be crafted to target a specific audience and take advantage of that audience’s predisposition to believe messages that reinforce their own strongly held beliefs. The influencing power of the disinformation message lies in the subtle mix of authentic and believable elements presented in an efficient and attractive wrapper: an image. An effective disinformation image can be crafted in just minutes and disseminated on social media platforms from nearly anywhere on the planet using only a mobile device.

This annotated version of the disinformation message highlights each element of the graphic in order to examine the authenticity and believability of the information presented, as well as the expertise needed to debunk the claim.

| # | Element | Authenticity | Believability | Expertise needed |
|---|---|---|---|---|
| 1. | Snapchat | Social network platform known for using visual filters to change users’ facial appearance. | Amusing feature that leverages powerful image processing capability available in most phones. The plain-language Privacy Policy is still 13 pages long. | |
| 2. | Facial recognition database | More technology companies are using FRT to power features in products such as digital assistants & advertising. The same technology is also available to law enforcement. | Private companies & individuals are deploying surveillance capability with questionable accuracy & bias without regulatory oversight. | |
| 3. | FBI | FBI maintains a mugshot database with associated fingerprints & a criminal history record. | At least 11 states access & contribute to the mugshot database, which provides access to an unknown number of county & local law enforcement agencies. | |
| 4. | Dots/wireframe | Accurate visualization of how front-facing cameras & sensors use 2D images & depth maps to locate facial features. | Takes advantage of the assumption that viewers may be unlikely to challenge authenticity if it agrees with their disposition. | |
| 5. | Anonews | Legitimate daily news website. | Popular with supporters of the Anonymous movement. | |
| 6. | Me? | Call to action challenging the viewer’s belief in the claim. | Encourages the viewer to seek information to validate the presented claims. | |
| 7. | US9396354 | The patent (awarded to Snap) describes the application of user privacy protection rules to recognized faces. | Patents are notoriously difficult for non-experts to understand. Not understanding the patent allows the viewer to continue believing the claim. | |

Local election officials and journalists interested in debunking that message would need to draw on several domains of expertise to build a counter-message with overwhelming factual backing from generally accepted, verifiable sources. This process takes a variety of resources, including expertise and time (hours or even days). That delay allows the disinformation message to be absorbed by the target audience and to spread to secondary social networks and traditional media outlets, where it may influence others. It takes teamwork to identify and remove disinformation from social media platforms. Even then, there is a real possibility that the disinformation message will never be debunked at all, because educating the audience is a distinct process from identifying and removing the content.

In response to this new reality, the European Commission has developed a strategy to counter online disinformation that recognizes the role that private companies play in addressing the problem. One component of this strategy is the Code of Practice on Disinformation. This is a commitment from private companies like Facebook, Google, Microsoft, Mozilla, and Twitter to scrutinize political and issue-based ads, while preventing platform abuse by automated bot accounts. Some companies are taking an even closer look into how disinformation is generated and how readers who choose to can avoid it. Mozilla is exploring the relationship between online advertising and disinformation, which is complex because disinformation is often legal content and low-quality news outlets are leveraging advertising platforms to sustain themselves while reaching targeted audiences. Microsoft provides NewsGuard as a browser extension to signal information trustworthiness to readers based on reviews by trained analysts and experienced journalists. In addition to these efforts and the Member State Action Plan Against Disinformation (to build up capabilities and strengthen cooperation), the EU also calls on private companies to look beyond Europe.

Outside of Europe, disinformation also continues to be a challenge for online companies. Elections recently occurred in two of the largest democracies in the world: Indonesia and India. Last month, the Indonesian election saw Facebook expand its global elections presence with the opening of operations centers in Singapore and Dublin. The goal is to bring together expertise from across products and functional teams to further develop and refine techniques and tactics to combat misinformation, hate speech, voter suppression and election interference. This necessarily requires understanding local language and customs through a combination of human and machine content moderation. For the election in India, Facebook partnered with third-party fact-checkers to cover eight of the most-spoken languages. Automatic translation of 16 languages allows a million accounts to be blocked or removed every day using artificial intelligence and machine learning. However, the number of blocked accounts or content takedowns should not be the only metrics of a successful program. One of the advantages of operating at global scale is that platforms can take the lessons learned in one country’s elections and incorporate them into election protection efforts across the world at a faster pace.

Disinformation messages have proven to be effective weapons, especially in elections when legitimate candidates and campaigns are actively messaging to groups and individuals. Malicious actors have the capability to create disinformation messages and disseminate them to target audiences with relative ease compared to the expertise, coordination, and time required to identify, remove, and debunk those messages before they cause harm. The European Commission is emphasizing this danger ahead of the upcoming European Parliament elections with the goal of raising public awareness and calling on private companies to stymie the flow of disinformation messages spread by bot accounts. Unlike with a hurricane, it will be difficult to judge whether those warnings were effective and just how much damage was caused, because private companies hold this data and have only been willing to share portions of it on a voluntary basis.