Mind the Gap: Can Large Language Models Analyze Non-English Content?

Location: Online

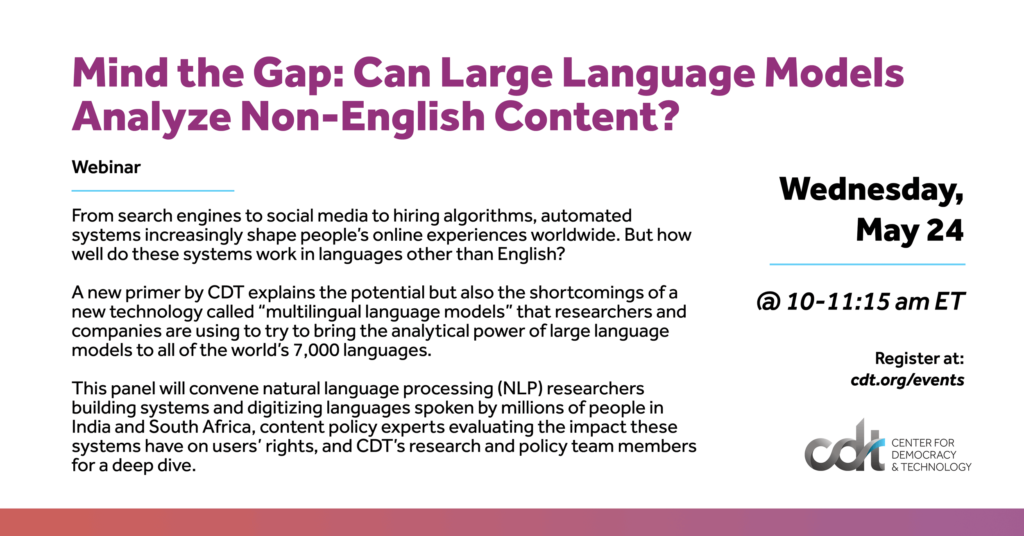

Time: 10:00 AM EDT

Date: May 24, 2023

From search engines to social media to hiring algorithms, automated systems increasingly shape people’s online experiences worldwide. Despite internet users speaking thousands of languages, most of these systems are primarily trained using English-language data. Computer scientists claim that they have found a solution to this linguistic gap in a new technology called “multilingual language models.” Multilingual language models work similarly to the language models that power new generative systems like ChatGPT, but instead of being trained on millions of examples of text in mostly one language, they pull text from dozens or hundreds of languages and learn connections between them.

But do these multilingual language models work as well as companies say they do? A new technical primer by CDT shows that these systems may have key shortcomings that only compound when they are used to analyze non-English languages.

This panel will convene NLP researchers building systems and digitizing languages spoken by millions of people in India and South Africa, content policy experts evaluating the impact these systems have on users’ rights, and CDT’s research and policy team members for a deep dive into how these multilingual language models work, what their capabilities and limitations are, how they can be improved, and what’s at stake when these systems fall short.

Speakers:

- Aliya Bhatia, Center for Democracy & Technology

- Gabriel Nicholas, Center for Democracy & Technology

- Dr Monojit Choudhury, Microsoft

- Dr Vukosi Marivate, University of Pretoria, Lelapa AI and Masakhane NLP

- Jacqueline Rowe, Global Partners Digital